This two-part article describes how we applied Procedural Generation when creating content for the Oil Rush naval strategy game and the Valley benchmark. Both were developed with our own game engine, UNIGINE. Everything set forth below represents the authors’ personal opinion; it does not claim to be absolute truth or to describe the whole situation.

The second part can be found here.

In addition to the theory, the content creation process and the issues we faced, the article includes source materials (procedural textures) that are free to download. You can use them for your own needs with or without modifications; however, they cannot be distributed for a fee, either on their own or as part of any library.

The authors decided not to polish, optimize or simplify these materials in any way, so as not to embellish the real situation. They are taken from a real working environment with tight deadlines and an endless flow of rework requests from the customer (whoever that was: an Art Director, a Producer or a Publisher). We believe that anyone who finds these materials useful for a project will easily understand them and modify them as needed.

THE PAIN TRADITIONAL TEXTURING CAN BRING

Typically, artists create a texture using one of two common methods, or a combination of them. The first is based on real photos followed by retouching and slight modifications. The second is to paint the texture on a pen tablet completely from scratch. Both methods, alone or combined, share a huge drawback: texture variants cannot be iterated quickly. Such textures have no parametrization at all, so there are no parameters to edit when a fast change is needed. As a result, even a small change to the diffuse texture requires changes to all the other textures of the same object (at least the Normal and Specular maps for modern 3D content). The only modifications that can be made quickly are changes to the brightness, contrast or color of particular areas.

Besides, these methods lead to monotonous work: making one brush stroke after another for a very long time, looking through heaps of photos to find the right one, retouching problem areas, making image borders seamless and so on. All this routine work can be avoided by using Procedural Generation appropriately.

Procedural Texture Generation is the computer generation of textures: the color of each pixel is generated algorithmically rather than manually. This makes it possible to use various functions such as Perlin noise or Fortune’s algorithm (which builds Voronoi diagrams).
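A minimal sketch of what "generated algorithmically" means in practice. It assumes a simple hash-based value noise summed over several octaves rather than true Perlin noise, and all function names and constants are illustrative; none of them come from the tools discussed in this article.

```python
import math


def lattice_value(ix, iy, seed):
    """Hash an integer lattice point to a repeatable value in [0, 1)."""
    h = (ix * 374761393 + iy * 668265263 + seed * 1442695041) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    return h / 2**32


def fade(t):
    """Smoothstep curve that hides the lattice grid in the result."""
    return t * t * (3.0 - 2.0 * t)


def value_noise(x, y, seed=0):
    """Smoothly interpolate the four lattice values around (x, y)."""
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = fade(x - ix), fade(y - iy)
    top = (1 - fx) * lattice_value(ix, iy, seed) + fx * lattice_value(ix + 1, iy, seed)
    bottom = (1 - fx) * lattice_value(ix, iy + 1, seed) + fx * lattice_value(ix + 1, iy + 1, seed)
    return (1 - fy) * top + fy * bottom


def fractal_noise(x, y, seed=0, octaves=4):
    """Sum several octaves, halving amplitude and doubling frequency."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for octave in range(octaves):
        total += amplitude * value_noise(x * frequency, y * frequency, seed + octave)
        norm += amplitude
        amplitude *= 0.5
        frequency *= 2.0
    return total / norm


def render(size, seed=0, scale=4.0):
    """Compute every pixel from its coordinates; nothing is painted by hand."""
    return [[fractal_noise(scale * px / size, scale * py / size, seed)
             for px in range(size)] for py in range(size)]


texture = render(32, seed=7)  # 32x32 grayscale values in [0, 1)
```

Every pixel is a pure function of its coordinates and a handful of parameters, which is what makes the advantages discussed in the rest of the article possible.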

WHY USE PROCEDURAL TEXTURING?

The advantages of Procedural Generation over linear methods are listed below:

- Non-destructive editing. Changes can be made at any time and at any stage of image creation. Almost all procedural generation applications are node-based: you build a chain of operations by adding simple blocks and organizing them into complex sequences. This architecture stores the whole sequence of texture formation, keeps all dependencies between resources, and lets you use the same resource (an initial texture or the output of any block) as input for any subsequent block. It also allows switching from one color space to another (for example, splitting the image into RGB or HSL components). As a result, even complex node graphs remain readable, and other artists can easily look into the texture generation process and modify it if needed.

- Resolution independence. Textures can be rendered without any loss of detail and with the same quality no matter what output resolution is selected in the generator. Unlike bitmaps, procedural textures are described mathematically, so they do not suffer from the drawbacks of resampling. For example, if a procedural texture is based on a fractal noise function and we want to increase its resolution, the only thing that changes is the number of pixels for which the function is evaluated. This makes it easy to match the texture resolution to the scale of the 3D model.

- Rapid creation of unique content from existing work, by modifying or combining existing node graph blocks.

- Fast creation of similar textures. Once a procedural generator that produces the required texture is fully implemented, it is easy to generate any number of similar textures: all you need to do is change the seed value of the Random Number Generator. For example, if you have a texture generator for a bronze statue, you can generate textures for any number of similar statues.

- By changing the seed value of the Random Number Generator, you can quickly look through texture variants and choose the most suitable one.

- Fast modification of the final image by changing node parameters at any stage of the work.

- Base texture creation. You can create a texture (e.g. a surface texture), convert it into a bitmap image and paint over it in a bitmap editor of your choice.

- Automatic seamless tiling. Procedural texture generators usually come with seamless tiling algorithms, so seams can be removed by ticking a single checkbox.

- Generation of High Dynamic Range (HDR) images with subsequent adjustable conversion to Low Dynamic Range (LDR). In Procedural Generation, calculations are performed in floating-point values, so bits-per-pixel limitations do not apply.

- Convenient batch processing. If several images need to be processed by the same algorithm, you are not limited to the filters available in the application. You can implement any algorithm you need and apply it to any number of source images.

- Source file size does not depend on image resolution. Describing the algorithm takes only a few kilobytes, unlike bitmap images, which require 4 bytes per pixel for each layer.

- Small memory footprint. Since one texture can be used as input for multiple processing nodes, the program uses only one instance of the texture rather than storing several copies of it.
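Three of the points above (resolution independence, seed-based variants and seamless tiling) can be illustrated with one small sketch. It assumes a periodic lattice noise written from scratch for this example; the names `make_lattice`, `sample` and `render` are hypothetical and do not correspond to any generator mentioned here.

```python
import math
import random


def make_lattice(period, seed):
    """Random lattice values; the seed alone decides which variant we get."""
    rng = random.Random(seed)
    return [[rng.random() for _ in range(period)] for _ in range(period)]


def sample(lattice, u, v):
    """Evaluate the texture at continuous coordinates (u, v)."""
    period = len(lattice)
    x, y = u * period, v * period
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = x - ix, y - iy
    # Wrapping the lattice indices makes the left/right and top/bottom
    # edges continue into each other, so the tile repeats without seams.
    x0, x1 = ix % period, (ix + 1) % period
    y0, y1 = iy % period, (iy + 1) % period
    top = lattice[y0][x0] * (1 - fx) + lattice[y0][x1] * fx
    bottom = lattice[y1][x0] * (1 - fx) + lattice[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def render(size, seed, period=8):
    """Rasterize the same function at any requested resolution."""
    lattice = make_lattice(period, seed)
    return [[sample(lattice, px / size, py / size) for px in range(size)]
            for py in range(size)]


low = render(32, seed=7)      # quick preview
high = render(64, seed=7)     # same texture, simply evaluated at more points
variant = render(32, seed=8)  # a different but similar texture
```

The 64-pixel render is not an upscaled copy of the 32-pixel one: both evaluate the same function, so every second pixel of `high` matches the corresponding pixel of `low`.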

In addition to the problems of traditional methods mentioned above, some tasks simply cannot be accomplished in bitmap editors like Photoshop or GIMP. One of them is generating textures for terrains, which appear in almost every 3D environment. These textures are huge: 8192×8192 pixels is a common size (UNIGINE Engine allows high-resolution terrain textures up to 65536×65536 pixels for real-time 3D applications). It would take weeks to paint such a texture, and even minor modifications would take days. Such delays, not to mention the inconvenience of working with such huge images, are hardly acceptable to anyone.
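To put these sizes in perspective, here is a rough back-of-the-envelope calculation. It assumes uncompressed 8-bit RGBA layers (the 4 bytes per pixel mentioned earlier); the 20-layer document is a hypothetical example, not a figure from our project.

```python
BYTES_PER_PIXEL = 4  # assumption: uncompressed 8-bit RGBA


def layer_bytes(side):
    """Uncompressed size of one square layer, in bytes."""
    return side * side * BYTES_PER_PIXEL


def document_gib(side, layers):
    """Uncompressed size of a layered document, in GiB."""
    return layer_bytes(side) * layers / 2**30


print(layer_bytes(8192) / 2**20)   # MiB per layer at 8192x8192   -> 256.0
print(layer_bytes(65536) / 2**30)  # GiB per layer at 65536x65536 -> 16.0
print(document_gib(8192, 20))      # hypothetical 20-layer document -> 5.0
```

Undo history, masks and higher bit depths only add to these numbers.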

This is what the terrain texture creation process looks like without procedural generation:

- A high-poly terrain of the required size is generated in a dedicated 3D sculpting application.

- All textures, such as height maps, normal maps, ambient occlusion and various masks (e.g. a water surface mask), are baked.

- All generated textures are imported into a single Photoshop/GIMP document, layer by layer.

- Masks for different surfaces (mountains, stones, grass) are formed from the baked textures.

The main disadvantages of bitmap image editors that we face here:

- There is no image reference concept, so references cannot be used as instances when generating new layers. The introduction of Smart Objects in Photoshop improved the situation, but they are far from covering all use cases: they still cannot be used as masks for a layer. As a result, one bitmap image has to be copied many times, and the artist has to keep all dependencies and connections in mind. If a source image changes, the artist has to go through the whole process again.

- Often a set of layers is needed not only for the colors but also for the alpha channel. However, the alpha channel cannot be used as a mask for other layers. A workaround is to create a number of “nested layer folders with masks”, which is not always applicable.

Even a powerful PC can hardly handle loading and editing such huge textures because of insufficient RAM, constant data caching and the large amount of calculation. This forces artists to close background programs and reduce the number of Undo steps. It also forces them to split terrain textures by type (water, sand, grass), work on each separately and combine them only at the last stage of the work. Doomed to tedious work and to waiting while a texture is processed, artists cannot produce the high-quality results they are capable of.

Photoshop, the industry-standard application for texture creation and editing, is unfortunately targeted mostly at photographers and retouchers. No substantial effort has been made to introduce non-destructive editing or image parametrization. That makes working with Photoshop difficult not only for 3D artists, but also for GUI designers, 2D artists and web designers.

Ever since GIMP came out, it has been trying to replicate Photoshop functionality with an even less user-friendly UI (though that could be expected from free software), rather than focusing on its own solutions and trying to implement a non-destructive approach to image editing.

We found the following problems of currently available bitmap editors the most pressing:

- Inability to reuse an existing image in different places (no reference/instance mechanism is implemented);

- Inability to use separate layers as masks.

PROCEDURALLY GENERATED OIL RUSH CONTENT

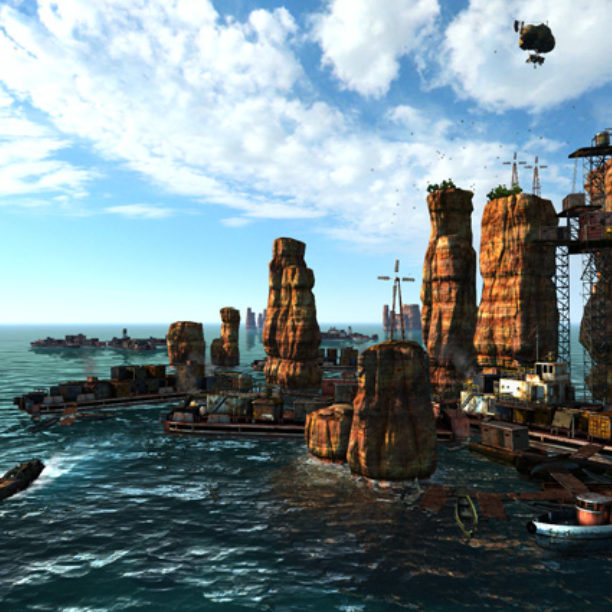

We chose to use Procedural Generation while working on Oil Rush, a naval strategy game set in a flooded post-apocalyptic world. We had to create realistic environments for six unique settings: the Antarctic ocean, fishing villages in the tropics, settlements among the canyons, the industrial zone, flooded city ruins and reefs. These environments have different relief and climate conditions and feature their own types of buildings. We wanted the content to look natural and diverse and, of course, to release the game as early as possible, which is why we came up with the idea of using Procedural Generation.

Before you start creating content with Procedural Generation, you need to think about the physics that drives the object or phenomenon in the first place. You need to single out the key processes that form its appearance and simplify them as much as possible.

Having tried almost every procedural generator available on the market at that time, we decided that Filter Forge suited our needs best. We used it to create content for the environment and for some special effects, including:

Environment

- Ocean waves

- Icebergs

- Canyons

- Rocks

- Ice floes

- Ocean floor

- Oil slick

- Ruins

- Distribution masks to draw drift weeds, floating ice and debris

- Vegetation on rocks

Effects

- Foam and spatter

- Trails on the water from moving units

- Light flashes (e.g. welding)

- Spreading oil spills

OCEAN WAVES

Creating realistic waves with linear methods is not a trivial task. Since wave textures cover vast areas, they have to tile well: the less visible the repeating pattern is, the higher the visual quality of the water. It is quite difficult to generate normal and height maps for the water surface from water photos alone in applications such as CrazyBump and nDo2, because of the transparency, reflection and refraction of water. Of course, waves can be sculpted one by one and tiled in applications such as ZBrush, Mudbox or 3D-Coat and then baked into a normal map, but that takes a huge amount of time.

Since procedural generators are clearly the only reasonable choice here, we should start by researching the physics of the phenomenon. Waves are created when the wind blows. Compared to air, water is much more inert, so waves form only if the wind blows steadily over an area. The key parameters are therefore the wind direction, the size of the area and the wind intensity. Together they set the direction, height and width of each wave. We should also keep in mind that the ocean surface oscillates and that waves overlap.

We simplified the physical model and made two types of waves, for strong and gentle wind, each of which required two normal maps with different main wave directions.
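The simplified model can be sketched as a sum of sine waves clustered around a main wind direction. This is only an illustration of the idea and not the contents of our Filter Forge filter: the spread of angles, the amplitude rule and all names (`make_waves`, `height`, `normal`) are assumptions made for this example.

```python
import math
import random


def make_waves(direction_deg, wind_strength, count=8, seed=0):
    """Pick wave components clustered around the main wind direction."""
    rng = random.Random(seed)
    waves = []
    for _ in range(count):
        angle = math.radians(direction_deg + rng.uniform(-25.0, 25.0))
        cycles = rng.uniform(2.0, 8.0)
        # Integer wave numbers make every component periodic over the tile.
        kx = round(cycles * math.cos(angle))
        ky = round(cycles * math.sin(angle))
        if kx == 0 and ky == 0:
            kx = 1
        amplitude = wind_strength / math.hypot(kx, ky)  # longer waves are taller
        phase = rng.uniform(0.0, 2.0 * math.pi)
        waves.append((kx, ky, amplitude, phase))
    return waves


def height(waves, u, v):
    """Height of the water surface at tile coordinates (u, v)."""
    return sum(a * math.sin(2.0 * math.pi * (kx * u + ky * v) + p)
               for kx, ky, a, p in waves)


def normal(waves, u, v):
    """Unit normal computed from the analytic slope of the height field."""
    dh_du = sum(a * 2.0 * math.pi * kx * math.cos(2.0 * math.pi * (kx * u + ky * v) + p)
                for kx, ky, a, p in waves)
    dh_dv = sum(a * 2.0 * math.pi * ky * math.cos(2.0 * math.pi * (kx * u + ky * v) + p)
                for kx, ky, a, p in waves)
    length = math.sqrt(dh_du * dh_du + dh_dv * dh_dv + 1.0)
    return (-dh_du / length, -dh_dv / length, 1.0 / length)


# Two maps per wind type, each with its own main direction.
strong_a = make_waves(direction_deg=30.0, wind_strength=0.05, seed=1)
strong_b = make_waves(direction_deg=75.0, wind_strength=0.05, seed=2)
```

Because every component completes a whole number of cycles across the tile, the resulting map tiles seamlessly in both directions.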

In the UNIGINE Engine, the water shader simulates waves by overlapping two normal maps with animated texture transformation parameters. The texture coordinates of each map change at runtime according to that map's own law, so a unique area of ocean surface is formed at any given moment.
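The idea of overlapping two scrolling normal maps can be sketched on the CPU as follows. This is not the UNIGINE water shader: the scroll speeds, the scale of the second map and the blending rule (a normalized sum) are assumptions chosen for illustration.

```python
import math


def unit(x, y):
    """Build a unit normal from a surface tilt along x and y."""
    length = math.sqrt(x * x + y * y + 1.0)
    return (x / length, y / length, 1.0 / length)


def sample(normal_map, u, v):
    """Nearest-texel lookup with wrap-around (repeat) addressing."""
    rows, cols = len(normal_map), len(normal_map[0])
    x = int((u - math.floor(u)) * cols) % cols
    y = int((v - math.floor(v)) * rows) % rows
    return normal_map[y][x]


def water_normal(map_a, map_b, u, v, time,
                 scroll_a=(0.03, 0.01), scroll_b=(-0.02, 0.025), scale_b=1.7):
    """Blend two normal maps whose coordinates drift independently in time."""
    ax, ay, az = sample(map_a, u + scroll_a[0] * time, v + scroll_a[1] * time)
    bx, by, bz = sample(map_b, u * scale_b + scroll_b[0] * time,
                        v * scale_b + scroll_b[1] * time)
    nx, ny, nz = ax + bx, ay + by, az + bz  # simple blend: add, then renormalize
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    return (nx / length, ny / length, nz / length)


# Tiny stand-in maps; real ones would be the generated wave normal maps.
map_a = [[unit(0.2, 0.0), unit(-0.2, 0.0)], [unit(0.0, 0.2), unit(0.0, -0.2)]]
map_b = [[unit(0.0, 0.1), unit(0.1, 0.0)], [unit(-0.1, 0.0), unit(0.0, -0.1)]]

now = water_normal(map_a, map_b, 0.1, 0.1, time=0.0)
later = water_normal(map_a, map_b, 0.1, 0.1, time=20.0)
```

Since the two maps move with different speeds, directions and scales, the same pair of texels rarely lines up twice, which hides the tiling of each individual map.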

NORMAL MAPS FOR SIMULATING STRONG WIND IN OIL RUSH

Download 1_ocean_waves.ffxml

ICEBERGS, CANYONS, ROCKS, AND ICE FLOES CREATION

In this case traditional linear content creation tools did not suit us, for two reasons:

- We needed to generate a large number of environment object variations as quickly as possible;

- It was important from the start to preview object shapes, pick the ones that fully met our needs and discard the unsuitable ones without hesitation.

At present, the state of procedural generators for creating 3D objects is far from what we would like. The situation may well change if a scripting language is added to the API of the 3D-Coat voxel editor, which would make it possible to use algorithms for 3D model generation. For now, we recommend that anyone interested try Houdini by Side Effects Software and Voxelogic Acropora. For our project, however, we decided to use a 2D procedural generator, Filter Forge, with a trick: we implemented a filter that forms a height map for icebergs, and then, in 3D software, displaced a high-poly mesh by that map.
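The displacement step itself is conceptually simple. The sketch below assumes a flat grid mesh displaced along +Z and a hand-written 3×3 height map standing in for the filter output; a real 3D package displaces along each vertex normal and works with far denser meshes. All names are hypothetical.

```python
def make_plane(segments):
    """Flat square grid of (x, y, z) vertices covering [0, 1] x [0, 1]."""
    return [(i / segments, j / segments, 0.0)
            for j in range(segments + 1) for i in range(segments + 1)]


def sample_bilinear(height_map, u, v):
    """Bilinear lookup into a height map that is at least 2x2 texels."""
    rows, cols = len(height_map), len(height_map[0])
    x, y = u * (cols - 1), v * (rows - 1)
    ix, iy = min(int(x), cols - 2), min(int(y), rows - 2)
    fx, fy = x - ix, y - iy
    top = height_map[iy][ix] * (1 - fx) + height_map[iy][ix + 1] * fx
    bottom = height_map[iy + 1][ix] * (1 - fx) + height_map[iy + 1][ix + 1] * fx
    return top * (1 - fy) + bottom * fy


def displace(vertices, height_map, strength):
    """Push each vertex along +Z (the plane's normal) by the sampled height."""
    return [(x, y, z + strength * sample_bilinear(height_map, x, y))
            for x, y, z in vertices]


# Stand-in for a generated height map: a single peak in the middle.
height_map = [[0.0, 0.5, 0.0],
              [0.5, 1.0, 0.5],
              [0.0, 0.5, 0.0]]
mesh = displace(make_plane(8), height_map, strength=2.0)
```

Generating a new object variant then means rendering a new height map and repeating the displacement, with no manual sculpting involved.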

Diffuse textures for the objects were created in Photoshop from the existing ambient occlusion, height and cavity textures, because its tools for compositing several images are more convenient than those in Filter Forge. In Photoshop you do not have to leave the filter every time you need to render and save the image, and you can record routine, frequently used action sequences (e.g. saving a file in the required format) and replay them with an assigned hotkey. As a result, you can preview the resulting color texture much faster and more easily.

Canyons, rocks, and ice floes were created the same way.

Canyons in Oil Rush

The height map was used to form the relief of several rock elements, which were then combined to form reefs.

Forming rocks and making their relief

Download 6_canyon_top.ffxml

Ice floes in Oil Rush

OCEAN FLOOR CREATION

Filter Forge is pretty good at generating small terrain objects with low relief and weak erosion (for other purposes, dedicated terrain generators are more efficient). For Oil Rush, it was a perfect fit for creating the ocean floor texture.

OIL SLICK CREATION

To simulate an oil slick, we had to lay a colored semi-transparent image over the ocean surface. The UNIGINE Engine lets you adjust the order in which materials are rendered. We made the oil slick render over the volumetric object that forms the water depth effect, but under the surface with reflections and refractions. As a result, reflections, blurring and glare from the waves affect the oil slick. All that was left was to generate an oil slick diffuse texture with an alpha channel in Filter Forge.

RUINS

Ruins textures were created in Photoshop by compositing photographic textures with procedurally generated Filter Forge output.

While working on this task, we wanted to make multipurpose filters for simulating chips and rust streaks that could later be reused for architectural structures.

During this work we faced the following Filter Forge issues:

- Finished filters or their parts cannot be saved into independent components with their own inputs and outputs and then reused as nodes in other filters (we hope this problem will be solved in the Version 4).

- The results of node calculations are not cached. If you make any change, the whole graph branch is recalculated, not just the part affected by the change. A filter often starts with complex noise generators that form the character and pattern of a surface and rarely need changes. They are followed by blocks responsible for color, contrast and blending, which, unlike the first group, need many more iterations. Because results are not cached, the more complex a filter becomes, the harder it is to work with. This forces you to compose the final texture in linear image editors.

Caching would require more memory, but it would significantly speed up the calculations and remove the need to resort to bitmap editors, which would make the software easier to use and much more useful.
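A minimal sketch of the kind of per-node caching we have in mind. It says nothing about how Filter Forge is implemented internally; the `Node` class and the two toy operations are hypothetical and exist only to show that changing a downstream parameter does not have to re-run the expensive noise stage.

```python
import random


class Node:
    """A graph node that remembers its last result until an input changes."""

    def __init__(self, func, inputs=(), **params):
        self.func = func
        self.inputs = list(inputs)
        self.params = params
        self.dependents = []
        self.evaluations = 0  # how many times func actually ran
        self._cache = None
        self._dirty = True
        for node in self.inputs:
            node.dependents.append(self)

    def set_param(self, name, value):
        self.params[name] = value
        self.invalidate()

    def invalidate(self):
        """Mark this node and everything downstream of it as stale."""
        self._dirty = True
        for node in self.dependents:
            node.invalidate()

    def evaluate(self):
        """Recompute only if stale; otherwise return the cached result."""
        if self._dirty:
            args = [node.evaluate() for node in self.inputs]
            self._cache = self.func(*args, **self.params)
            self.evaluations += 1
            self._dirty = False
        return self._cache


def make_noise(size, seed):
    """Stand-in for an expensive noise generator."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(size)]


def adjust_contrast(pixels, gain):
    """Stand-in for a cheap, frequently tweaked adjustment block."""
    return [min(1.0, max(0.0, (p - 0.5) * gain + 0.5)) for p in pixels]


noise = Node(make_noise, size=16, seed=1)
contrast = Node(adjust_contrast, [noise], gain=1.0)

contrast.evaluate()
contrast.set_param("gain", 2.0)  # only the contrast node becomes stale
contrast.evaluate()
print(noise.evaluations, contrast.evaluations)  # prints "1 2"
```

The cost is exactly the one named above: every node keeps its last output in memory.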

OCEAN FOAM

In Oil Rush, each trail left on the water surface by a moving unit consists of the following particle systems:

- Foam running away from the boat sides

- Foam made by rotating screws (behind a boat)

- Air bubbles below the water surface

- Splashes above the water surface

We have used Filter Forge to create textures for the first two systems.

Ocean foam diffuse textures

The UNIGINE Engine supports texture atlases that hold multiple different images, one of which is randomly assigned to each particle generated by the emitter. This makes the shapes diverse and the effects irregular.

To implement the atlas in Filter Forge, we created two branches of the filter tree. The first is responsible for the foaming water pattern, the second for the visibility mask of the foam parts.
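Random atlas assignment boils down to picking a cell index per particle and converting it to a UV rectangle. The sketch below assumes a regular grid atlas numbered row by row; the function names are hypothetical and this is not the engine's API.

```python
import random


def atlas_cell_uv(index, columns, rows):
    """UV rectangle (u0, v0, u1, v1) of one atlas cell, counted row by row."""
    column, row = index % columns, index // columns
    width, height = 1.0 / columns, 1.0 / rows
    return (column * width, row * height,
            (column + 1) * width, (row + 1) * height)


def spawn_particles(count, columns, rows, seed=0):
    """Give every new particle the UV rectangle of a random atlas cell."""
    rng = random.Random(seed)
    return [atlas_cell_uv(rng.randrange(columns * rows), columns, rows)
            for _ in range(count)]


print(atlas_cell_uv(5, 4, 4))  # second row, second column of a 4x4 atlas
particles = spawn_particles(100, columns=4, rows=4)
```

With sixteen foam images in a 4×4 atlas, neighboring particles rarely show the same shape, so the trail does not look stamped.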

PROCEDURAL GENERATION ROLE IN THE SPECIAL EFFECTS CREATION

When creating effects, a technical artist creates and uses special textures that must be formed according to strict rules. In many cases this makes bitmap image editors useless for the purpose. For example, a frequently used dissolve shader requires a height map so that the texture disappears smoothly between adjustable low and high color limits. Likewise, an energy shield that covers an object with lightning needs at least three textures, combined by a complex shader, to look irregular.
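The dissolve example can be sketched as a smoothstep between the low and high limits applied to the height map value. This is a generic formulation written for this article, not a specific UNIGINE shader; `limits_for_progress` and its `softness` parameter are assumptions about how the limits might be animated.

```python
def smoothstep(edge0, edge1, x):
    """Smooth 0..1 ramp between edge0 and edge1, clamped outside."""
    t = min(1.0, max(0.0, (x - edge0) / (edge1 - edge0)))
    return t * t * (3.0 - 2.0 * t)


def dissolve_alpha(height, low, high):
    """Opacity of a pixel: 0 below the low limit, 1 above the high limit."""
    return smoothstep(low, high, height)


def limits_for_progress(progress, softness=0.1):
    """Slide the low/high limits as the effect goes from 0 (intact) to 1 (gone)."""
    low = progress * (1.0 + softness) - softness
    return low, low + softness


low, high = limits_for_progress(0.5)
print(dissolve_alpha(0.2, low, high))  # dark height values have already vanished
print(dissolve_alpha(0.8, low, high))  # bright height values are still opaque
```

The height map therefore defines the order in which pixels disappear, which is why it has to be generated by strict rules rather than painted by eye.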

A technical artist often has to implement a brand new effect that was described verbally or in concept documents. Before asking a programmer to write the necessary shaders, the artist should think about the textures and parameters needed to tune the effect and its visual parts. By prototyping in a procedural generator, a technical artist, even without any shader programming skills, can explain to the programmer exactly what is wanted, show how the effect should look and hand over all the required source textures.

Procedural Generation helps to avoid unpredictable results and insufficient parametrization of generated effects, and it also reduces effect development time.

CONCLUSION

According to our research, there are currently not many applications for procedural generation. The pros and cons of the two most mature ones, Filter Forge and Substance Designer, are listed below. In our opinion, both can be used in real production conditions. Which one to choose depends on the disadvantages you are ready to accept.

FILTER FORGE

Pros

- Comes with a rich and sensible set of basic components that lets you create almost any filter.

- Allows you to create your own components using the Lua scripting language.

- User-friendly filter editor.

- Quite handy noise-generating components, forming a basic pattern for almost any surface.

- Free access to a huge filter library. You are very likely to find the filter that meets your needs and edit it if necessary.

Cons

- No GPU acceleration. All calculations are performed on the CPU.

- No output component that can write any number of images to external bitmap files. In addition, these images cannot be saved with a hotkey in the filter editing mode (which is needed to get the full set of textures after tuning the filter parameters).

- No Change Tracking. Input textures won’t be automatically updated when their source files are modified.

- Only one buffer in Lua scripts. It is not possible to create and properly use additional buffers from a Lua script (e.g. a glow effect needs several buffers). The only workable method is to use Lua tables, which brings many problems of its own.

- No filter grouping. Existing filters cannot be grouped, which may be necessary when creating more complex filters.

- 2D-oriented. No 3D viewport to quickly preview the results.

Feedback

- For source images, it would be great to support PSD (with the ability to choose the whole image or a single layer) and SVG (so that source images are resolution-independent; this format is handy for masks).

- Comment blocks would be a welcome addition: it would be very helpful to annotate the operations performed by a group of nodes.

Besides using Filter Forge as the main procedural generator, you should probably also try Allegorithmic Substance Designer.

SUBSTANCE DESIGNER

Pros

- Quite a flexible and user-friendly UI, adjustable for your needs.

- A ready-to-use, flexible and continuously developing tool, Bitmap2Material, helps to generate the full set of necessary textures (e.g. specular, ambient occlusion, height map, normal map) from a source bitmap image.

- A rich set of Smart Texture libraries.

- Their own solution, Substance Air, generates textures at runtime from their source graphs (sbsar files).

- Fast calculation algorithms (textures seem to be generated almost in real time).

- 3D-oriented. A 3D viewport lets you preview the results quickly.

- Allows baking additional maps (ambient occlusion, cavity etc.) from the model and regenerating them with a single click.

- All complex filters (“substances” in Substance Designer terminology) are based on elementary blocks, which lets you make any necessary change quickly.

Cons

- Poor set of noise generators. This is the main disadvantage, as noise generators form the surface pattern. The basic library must have a necessary and sufficient number of procedural generators. As it is, before solving the task at hand you have to build your own library of noise generators.

- No scripting languages available to create new nodes.

- Poor set of basic blocks; together with their sometimes illogical usage, this gets in the way of even basic operations.

- Poor set of basic substances. Additional libraries have to be paid for, and their total cost is much higher than the cost of the program itself.

SUMMARY

In this part, we have tried to give an overview of the problems of generating a large volume of high-quality content for real-time 3D applications in a very short time, to provide examples of our solutions and to describe possible prospects for the further development of procedural content generators.

The second part is dedicated to the procedural generation methods used in content production for the Valley benchmark.

USEFUL LINKS

oilrush-game.com — Oil Rush naval strategy game

unigine.com/products/unigine — UNIGINE Engine used in Oil Rush game and Valley benchmark

www.filterforge.com — Filter Forge procedural generator

www.allegorithmic.com — Substance Designer procedural generator

www.spiralgraphics.biz — Genetica procedural generator

www.noisemachine.com/talk1/index.html — Making Noise, Ken Perlin

libnoise.sourceforge.net/index.html — A portable, open-source, coherent noise-generating library for C++

AUTHORS

Andrey Kushner

Vyacheslav Sedovich @slice3d

(technical artists, UNIGINE Corp.)